Automated Strategy Factors

3/13/2026

Automated trading strategies have been popping up in my feed lately.

If someone is selling or offering an automated strategy, all of the following should have been tested and validated. As the potential user, you should be asking for this information before trusting the system.

Here are some important questions to ask:

Was the system built and optimized on the same data used in the backtest?

A proper process separates in-sample and out-of-sample data. Strategies should be developed and optimized on in-sample data, then tested on new, unseen out-of-sample data to confirm robustness. If a strategy only performs well on the exact data it was created on, it is very likely curve-fit and will probably fail in live markets.
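As a minimal sketch of that separation, assuming the data is a time-ordered list of bars: the function name and the 70/30 split are my own illustrative choices, not a standard.

```python
from typing import Sequence, Tuple

def split_in_out_of_sample(bars: Sequence, in_sample_fraction: float = 0.7) -> Tuple[list, list]:
    """Split time-ordered bars chronologically (no shuffling), so the
    out-of-sample part is strictly later than anything used for optimization."""
    if not 0.0 < in_sample_fraction < 1.0:
        raise ValueError("in_sample_fraction must be between 0 and 1")
    cut = round(len(bars) * in_sample_fraction)
    return list(bars[:cut]), list(bars[cut:])

bars = list(range(1000))  # stand-in for 1,000 time-ordered price bars
in_sample, out_of_sample = split_in_out_of_sample(bars)
print(len(in_sample), len(out_of_sample))  # 700 300
```

Optimize only on `in_sample`, then run the finished rules once on `out_of_sample`. If you keep tweaking after looking at the out-of-sample result, it stops being out-of-sample.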

Were commissions and slippage included in the backtest?

If commissions and slippage were not modeled, the results are not realistic. Even small costs can dramatically change performance, especially for high-frequency intraday strategies.
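To see how quickly costs eat an edge, here is a rough sketch with made-up numbers. The per-trade results and the $1 commission and $1 slippage per side are hypothetical, and real slippage varies from fill to fill rather than being a fixed amount.

```python
def net_pnl(gross_pnl_per_trade, commission_per_side, slippage_per_side):
    """Subtract round-trip commission and slippage from each trade's gross P&L."""
    round_trip_cost = 2 * (commission_per_side + slippage_per_side)
    return [pnl - round_trip_cost for pnl in gross_pnl_per_trade]

# Hypothetical gross results in dollars: 5 winners of 12.50, 3 losers of 10.00
gross = [12.5, -10.0, 12.5, -10.0, 12.5, 12.5, -10.0, 12.5]
net = net_pnl(gross, commission_per_side=1.0, slippage_per_side=1.0)

print(sum(gross), sum(net))  # 32.5 0.5
```

In this example a $4 round-trip cost turns a $32.50 gross profit into $0.50. The more trades a system takes, the more this matters.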

Does the backtest assume “fill on touch”?

Some backtests assume that the moment price touches your limit order, you are filled. In real markets this is not guaranteed. Your order sits in a queue, and there must be enough liquidity to fill you. Often price touches and reverses before your order is executed. If a strategy assumes fill on touch, the results are typically overly optimistic.
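One common conservative alternative is to count a limit order as filled only when price trades through it by at least one tick. The sketch below compares the two assumptions for a buy limit; the function and parameter names are my own, and this is a simplification, not a model of the actual order queue.

```python
def buy_limit_filled(bar_low: float, limit_price: float, tick_size: float,
                     require_trade_through: bool = True) -> bool:
    """Decide whether a resting buy limit counts as filled on this bar.

    Fill on touch: the bar's low reaches the limit price.
    Trade-through: the bar's low is at least one tick below the limit price.
    """
    if require_trade_through:
        return bar_low <= limit_price - tick_size
    return bar_low <= limit_price

# Price touches 100.00 exactly and reverses (tick size 0.25)
print(buy_limit_filled(100.00, 100.00, 0.25, require_trade_through=False))  # True
print(buy_limit_filled(100.00, 100.00, 0.25, require_trade_through=True))   # False
```

If the results fall apart when you switch from the first assumption to the second, the backtest was leaning on fills you probably would not have received.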

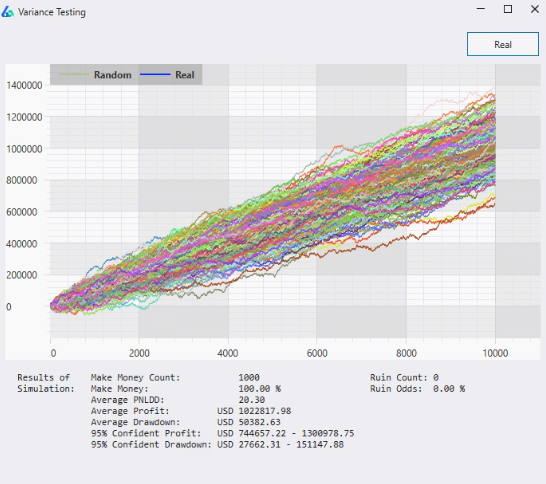

Has Monte Carlo simulation been performed?

Monte Carlo analysis randomizes the order of trades thousands of times to simulate alternate equity curves.

This helps estimate:

•Worst-case drawdown

•Probability of ruin

•Performance variability

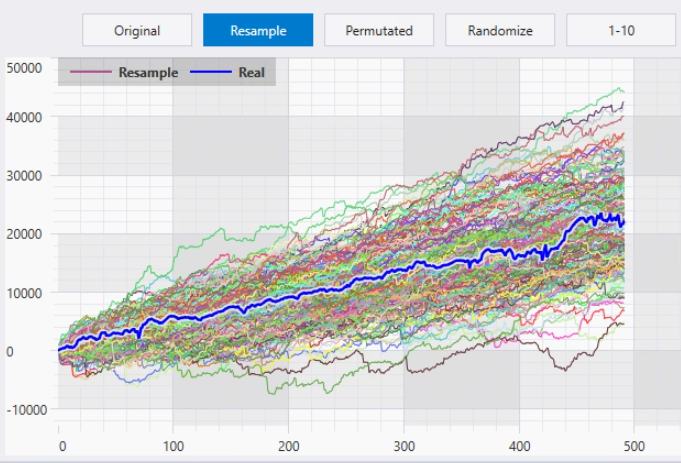
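The reshuffling idea can be sketched in a few lines. This is a simplified version that assumes trades are independent of each other and uses a hypothetical trade list. Shuffling does not change the total profit, only the path taken to get there, which is why drawdown is the number to watch.

```python
import random

def max_drawdown(trade_pnls):
    """Largest peak-to-trough decline of the cumulative P&L curve."""
    equity = peak = worst = 0.0
    for pnl in trade_pnls:
        equity += pnl
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst

def monte_carlo_drawdowns(trade_pnls, runs=1000, seed=0):
    """Shuffle the trade order `runs` times and collect each max drawdown, sorted."""
    rng = random.Random(seed)
    trades = list(trade_pnls)  # copy so the caller's order is untouched
    results = []
    for _ in range(runs):
        rng.shuffle(trades)
        results.append(max_drawdown(trades))
    return sorted(results)

trades = [150.0, -100.0] * 50  # hypothetical backtest: 100 alternating trades
drawdowns = monte_carlo_drawdowns(trades)

print("backtest-order max drawdown:", max_drawdown(trades))
print("median shuffled max drawdown:", drawdowns[len(drawdowns) // 2])
print("95th percentile max drawdown:", drawdowns[(95 * len(drawdowns)) // 100])
```

The backtest order here never loses twice in a row, so its drawdown looks tiny. The shuffled runs show what happens when the same trades arrive in a less friendly sequence.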

A robust strategy should survive many randomized trade sequences.

Has variance/noise testing been conducted?

Robust strategies should not break from tiny environmental changes.
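Here is a rough sketch of what such a test can look like. Everything in it is an illustrative assumption: the toy EMA rule, the synthetic price series, and the noise size. The point is only the shape of the test: re-run the same rules on slightly altered inputs and with neighboring parameter values, then compare.

```python
import random

def ema(prices, length):
    """Exponential moving average with the usual 2 / (length + 1) smoothing."""
    alpha = 2.0 / (length + 1)
    out = [prices[0]]
    for p in prices[1:]:
        out.append(alpha * p + (1 - alpha) * out[-1])
    return out

def toy_backtest(prices, ema_length):
    """Toy rule (assumption): hold long for the next bar when price closed above its EMA."""
    avg = ema(prices, ema_length)
    pnl = 0.0
    for i in range(1, len(prices)):
        if prices[i - 1] > avg[i - 1]:
            pnl += prices[i] - prices[i - 1]
    return pnl

def add_noise(prices, max_shift, rng):
    """Shift every price by a random amount in [-max_shift, +max_shift]."""
    return [p + rng.uniform(-max_shift, max_shift) for p in prices]

rng = random.Random(1)
prices = [100.0]
for _ in range(500):
    prices.append(prices[-1] + rng.gauss(0.05, 1.0))  # synthetic random walk with drift

baseline = toy_backtest(prices, 20)
neighbors = {n: toy_backtest(prices, n) for n in (19, 20, 21)}
noisy = [toy_backtest(add_noise(prices, 0.25, rng), 20) for _ in range(100)]

print("baseline:", round(baseline, 2))
print("neighboring EMA lengths:", {n: round(v, 2) for n, v in neighbors.items()})
print("noisy data, worst / best:", round(min(noisy), 2), round(max(noisy), 2))
```

What you want to see is results that drift a little, not results that flip from great to terrible.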

Examples:

•Adjust price data by ±5 points

•Delay entry slightly

•Change an EMA from 20 to 21

If small tweaks completely destroy performance, the strategy is likely over-optimized or statistically fragile.

Has the strategy been tested across market regimes?

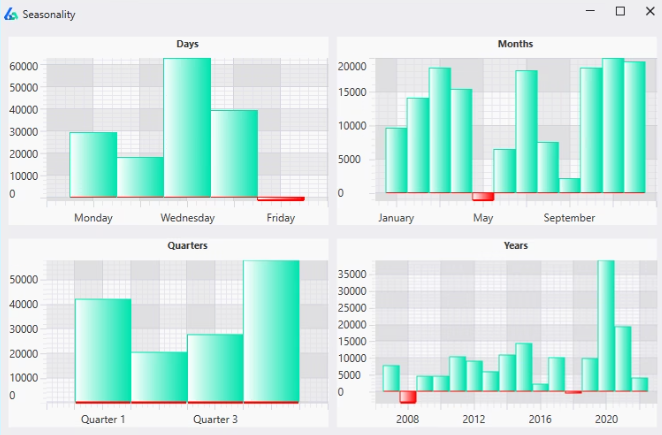

Markets behave differently during bull markets, bear markets, high-volatility environments, and low-volatility environments. A strategy may specialize in certain conditions, and that’s fine, but those conditions should be clearly defined.
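If each trade in the backtest is tagged with the regime it occurred in, a per-regime breakdown is simple. In this sketch the regime labels and trade results are hypothetical, and how you classify a regime is a separate decision it does not address.

```python
from collections import defaultdict

def pnl_by_regime(trades):
    """Count trades and sum P&L per regime label.

    trades: iterable of (regime_label, pnl) pairs.
    """
    summary = defaultdict(lambda: {"trades": 0, "pnl": 0.0})
    for regime, pnl in trades:
        summary[regime]["trades"] += 1
        summary[regime]["pnl"] += pnl
    return dict(summary)

# Hypothetical tagged trades
trades = [("bull", 120.0), ("bull", 80.0), ("bear", -150.0),
          ("high_vol", 60.0), ("bear", -40.0)]

for regime, stats in pnl_by_regime(trades).items():
    print(regime, stats)
```

A breakdown like this makes it obvious when a "works everywhere" system is really a bull-market system.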

Are profits stable over time?

Look at the distribution of profits across the backtest period. Are returns relatively consistent year-to-year, or did 90% of the profits occur during one short period? A strategy that claims five years of profitability but made most of its gains in a single month may not be reliable.
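A quick way to check this is to group profits by period and see how much of the total comes from the single best one. A minimal sketch, with hypothetical yearly numbers:

```python
from collections import defaultdict

def largest_period_share(trades):
    """Return (period, share) for the period contributing most to total net profit.

    trades: iterable of (period_label, pnl) pairs.
    Only meaningful when total net profit is positive.
    """
    by_period = defaultdict(float)
    for period, pnl in trades:
        by_period[period] += pnl
    total = sum(by_period.values())
    if total <= 0:
        raise ValueError("total net profit must be positive")
    best = max(by_period, key=by_period.get)
    return best, by_period[best] / total

# Hypothetical five-year backtest, trades tagged by year
trades = [(2021, 500.0), (2022, 4000.0), (2022, 5000.0),
          (2023, 300.0), (2024, -100.0), (2025, 300.0)]

print(largest_period_share(trades))  # (2022, 0.9)
```

The same function works with months or quarters as the period label, which is how you would catch the "one great month" case.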

Key performance metrics

Some basic metrics to evaluate:

•Expectancy – Positive expectancy is required. Ideally > 0.15R, where R is the amount risked per trade.

•Profit Factor (PF) – Gross profits ÷ gross losses. A PF > 1.5 is generally desirable.

•Trade Count – The more trades in the sample, the more statistically meaningful the results become. A few hundred trades tell you far more than twenty.

Live forward testing

This is the part that nearly every strategy creator I see misses. Can the developer provide live forward-tested results, or only simulated backtests? Backtests are useful, but they are still simulations. A strategy that has been running live and producing real results carries far more credibility.
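Whether the numbers come from a backtest or a live account, the metrics listed under Key performance metrics can be computed the same way from a plain list of trade results, which makes a side-by-side comparison easy. A minimal sketch, assuming each result is expressed as a multiple of the amount risked (R); the sample results are hypothetical.

```python
def expectancy_r(r_multiples):
    """Average result per trade, in units of R."""
    return sum(r_multiples) / len(r_multiples)

def profit_factor(r_multiples):
    """Gross profits divided by gross losses (infinite if there are no losses)."""
    gross_profit = sum(r for r in r_multiples if r > 0)
    gross_loss = -sum(r for r in r_multiples if r < 0)
    return float("inf") if gross_loss == 0 else gross_profit / gross_loss

# Hypothetical results: 45 winners of +2R, 55 losers of -1R
results = [2.0] * 45 + [-1.0] * 55

print("trade count:", len(results))              # 100
print("expectancy (R):", expectancy_r(results))  # 0.35
print("profit factor:", round(profit_factor(results), 2))  # 1.64
```

Run it once on the backtest trades and once on the live trades. A large gap between the two sets of numbers is a question worth asking the developer about.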

Final Statement:

These are just a few of the things every automated system developer should be able to demonstrate. If they can’t provide this information, or they avoid the questions, you should think twice before trusting their strategy.